Scavenger Elasticity: Memory Scaling with Load¶

Context¶

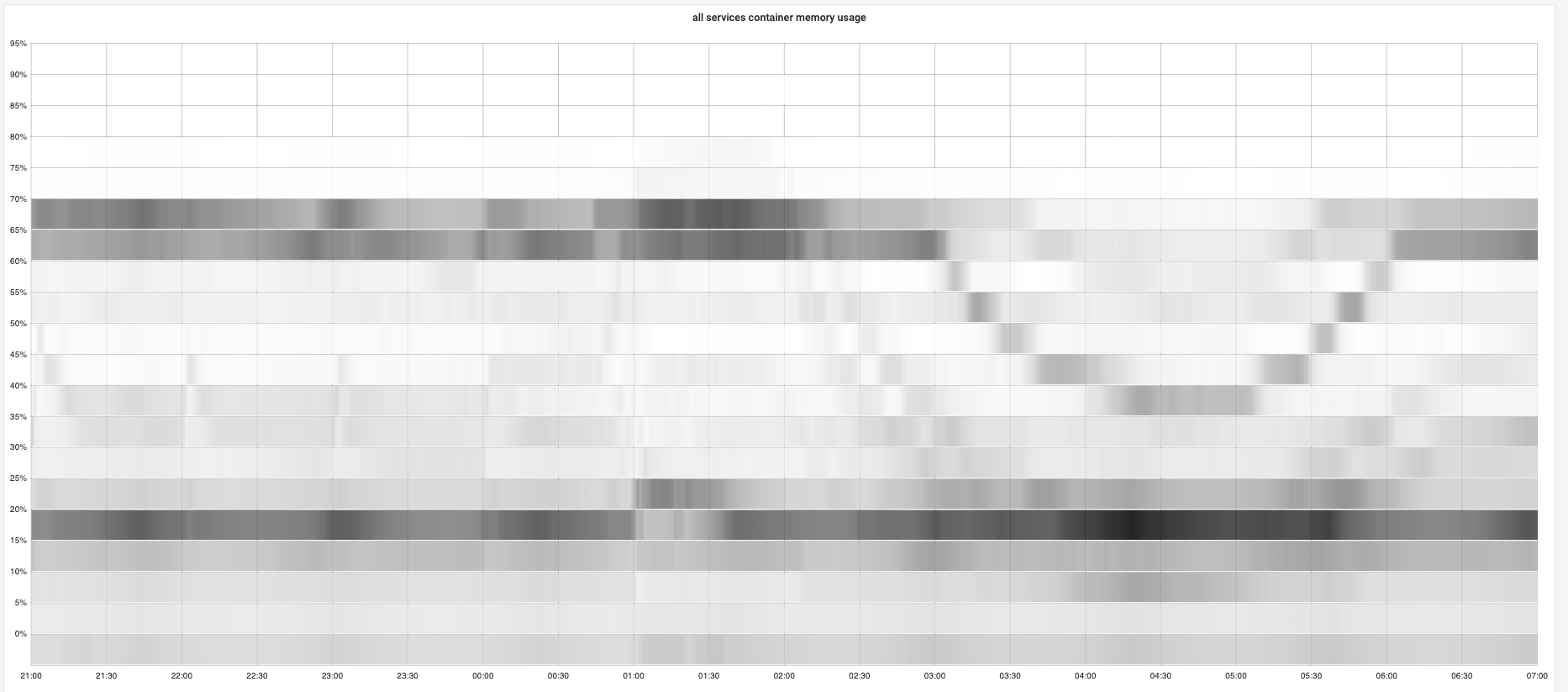

A common concern with increasing heap targets is: "Will memory ever come back down?" Our production data showed that the Go runtime's scavenger provides elastic memory behavior—scaling up during traffic spikes and releasing memory when load decreases.

Production Observation¶

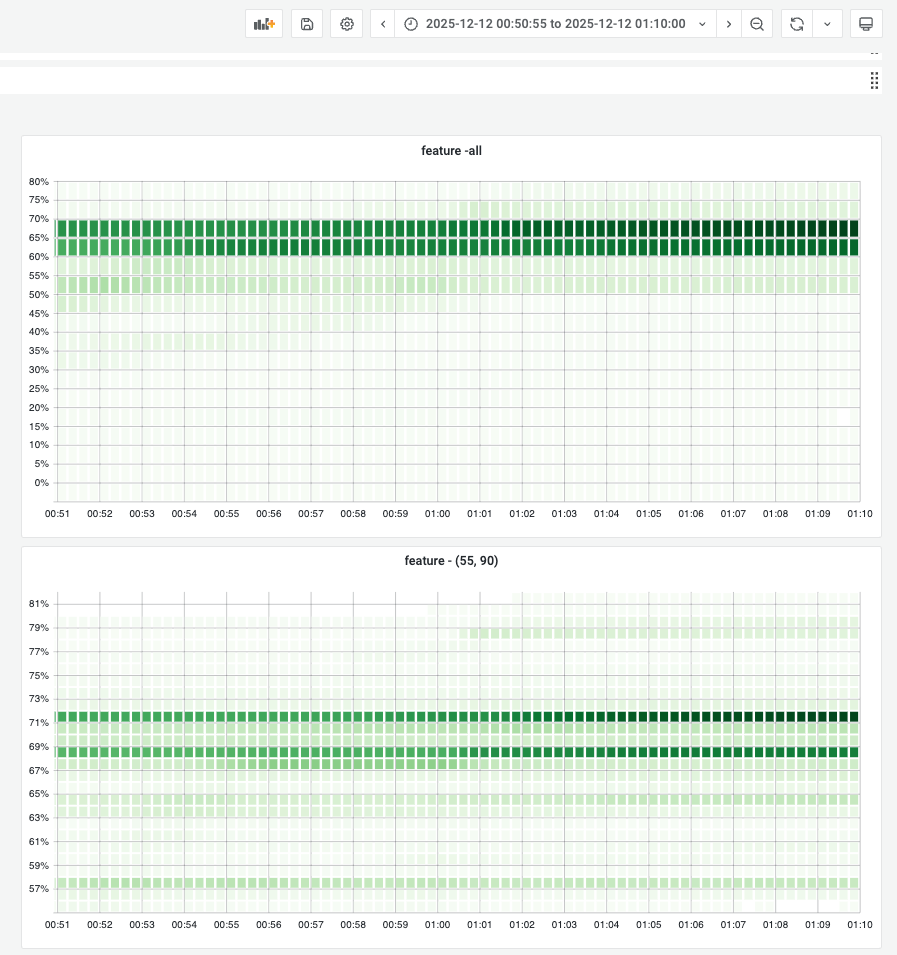

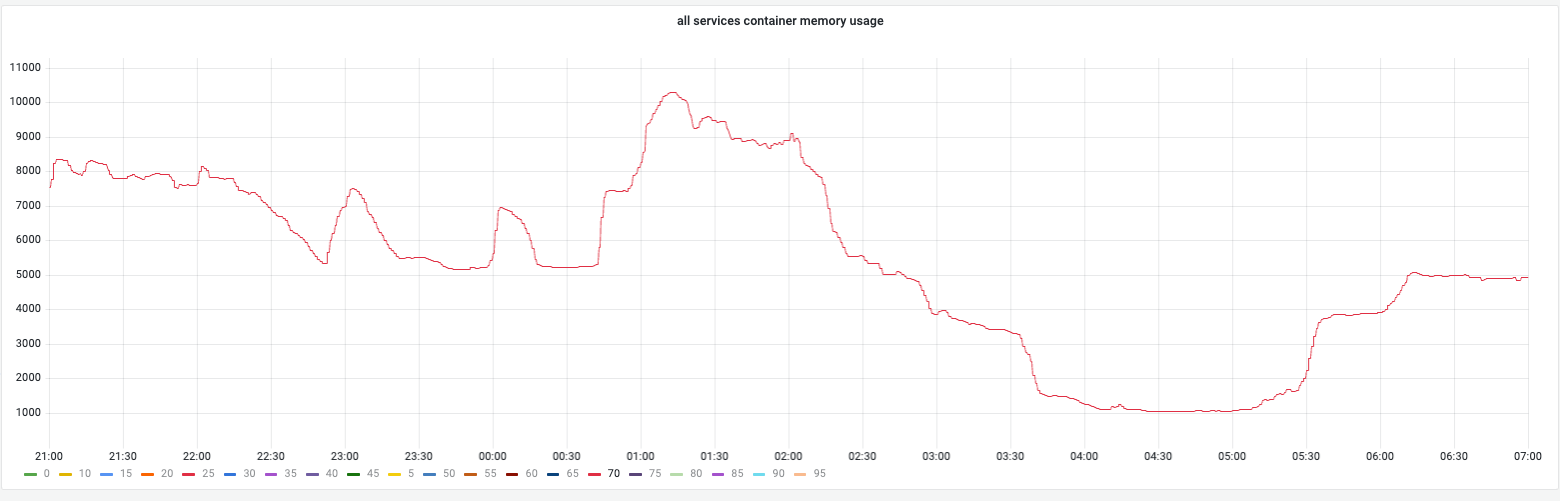

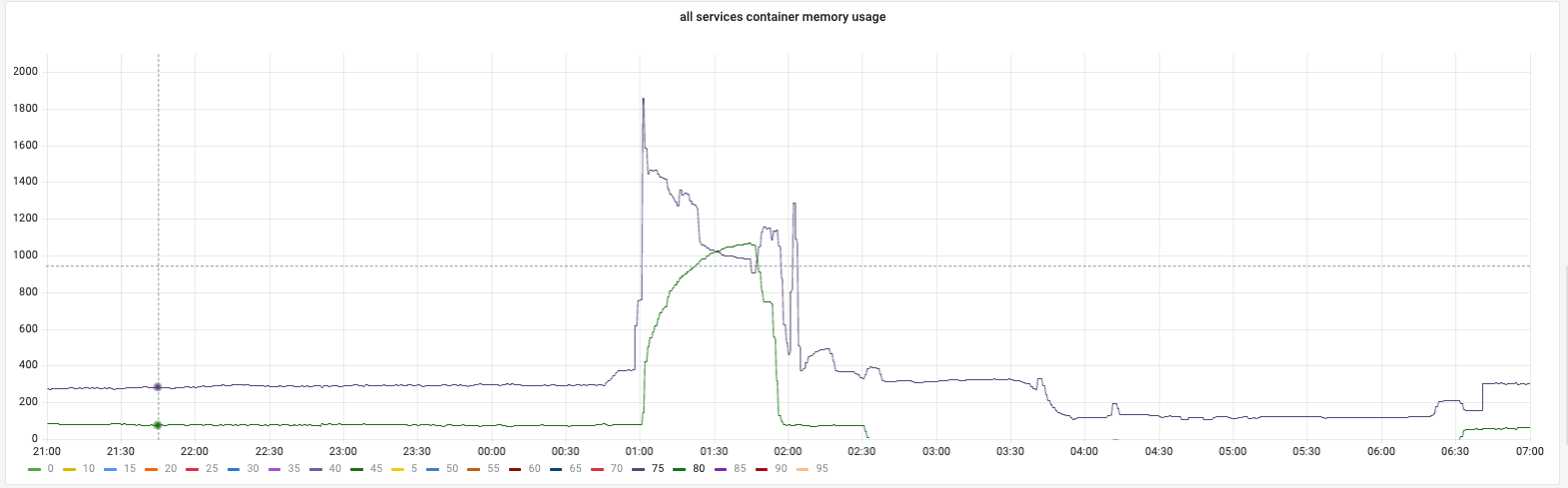

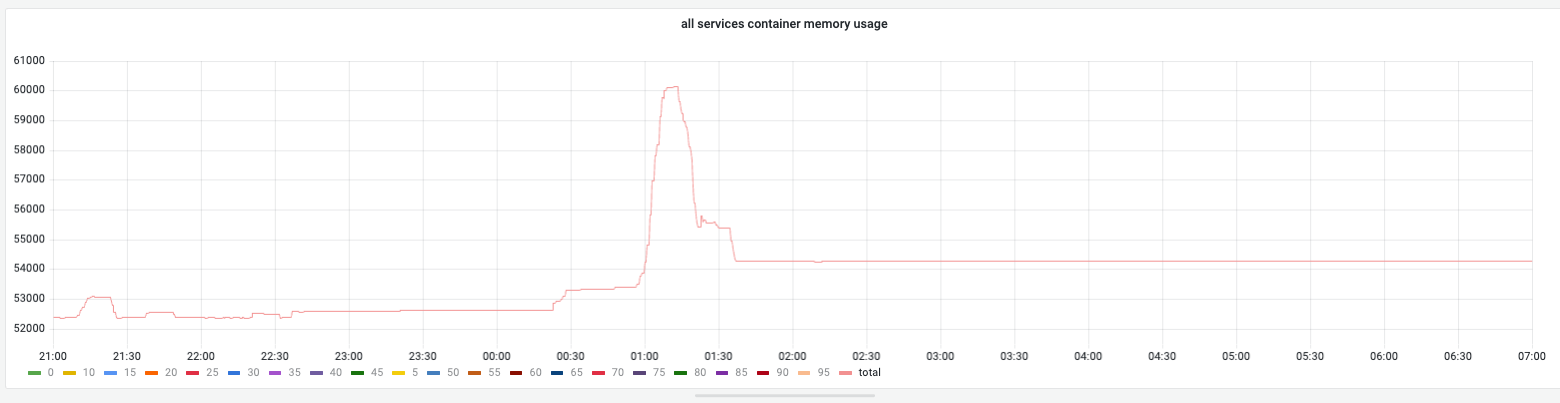

During Campaign (Traffic Spike)¶

Memory increased as traffic surged, with heap usage growing to meet the higher target.

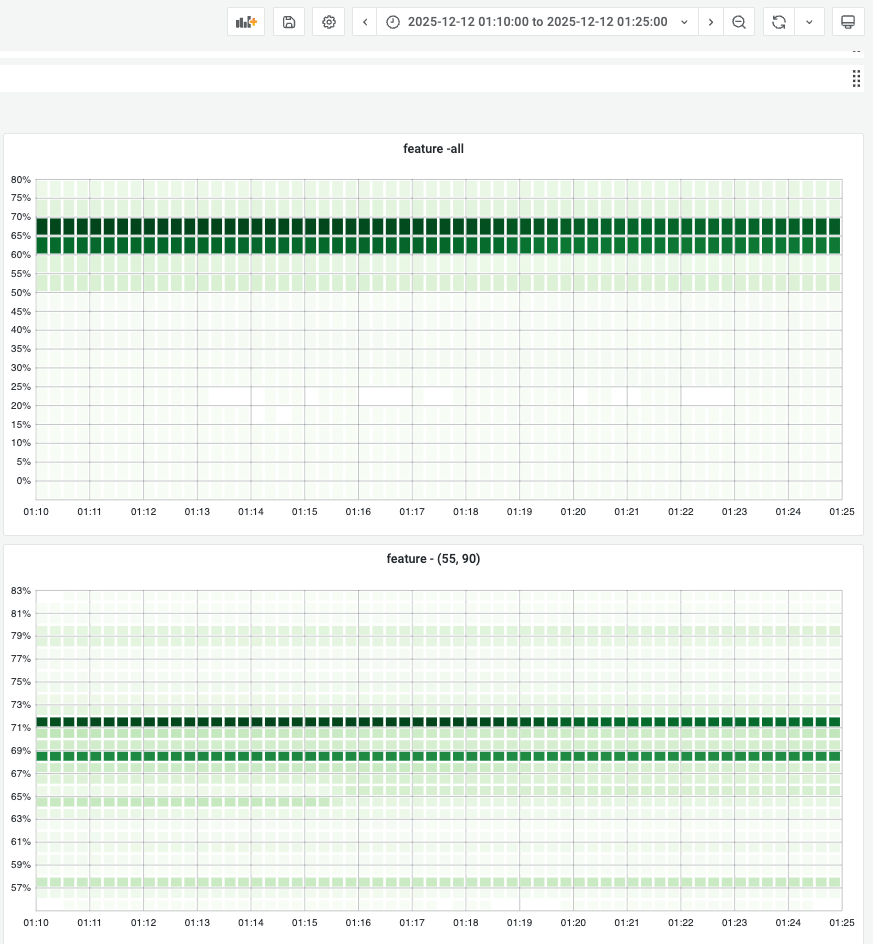

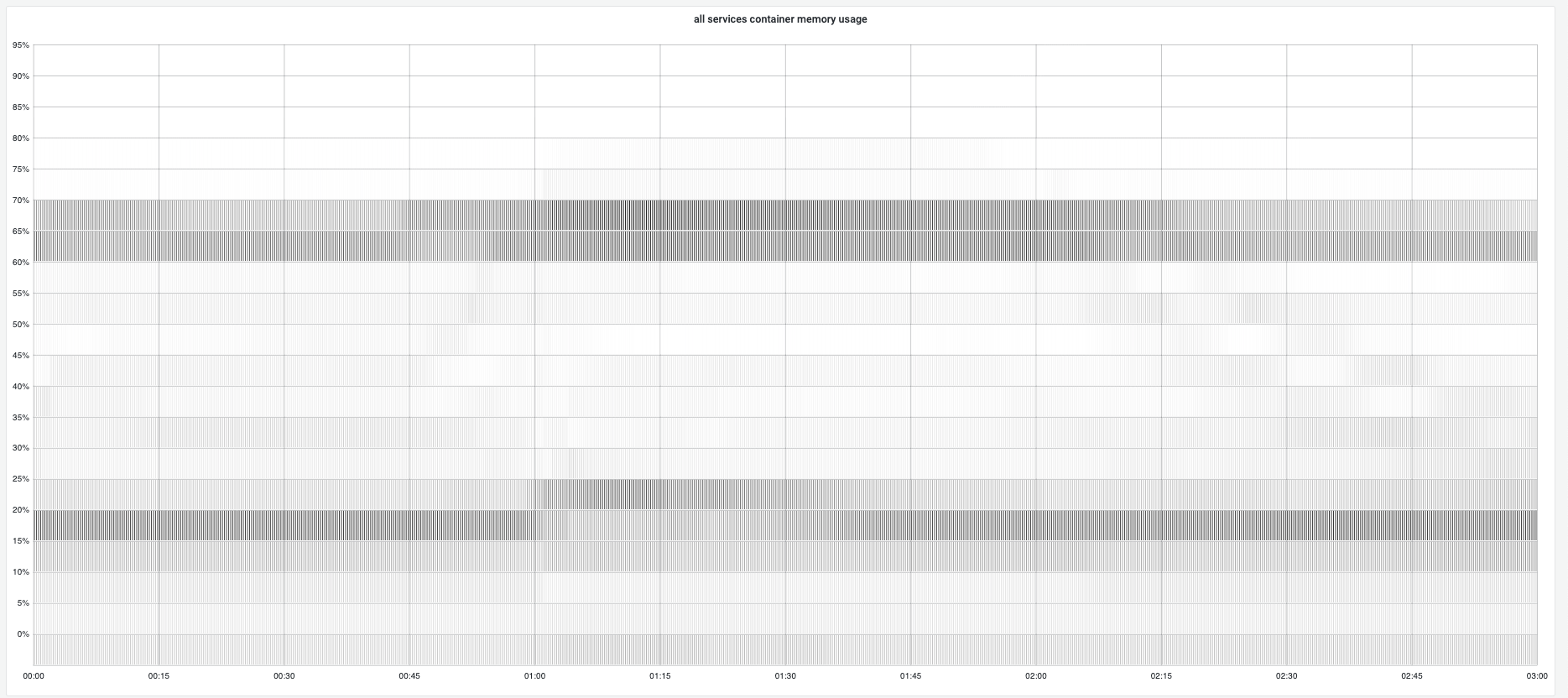

Post-Campaign (Traffic Normalization)¶

Observations:

1. Scavenging works: When traffic decreased, memory returned to OS

2. No fragmentation issues: Memory usage remained stable over time

3. Elastic behavior: Memory scales with load (increases during campaign, decreases after)

Why This Matters¶

Common Fear: "Memory Only Goes Up"¶

Many operators worry that once the heap grows, it never shrinks—leading to gradual memory bloat.

Reality: Elastic Scaling¶

The Go runtime's background scavenger continuously:

1. Monitors idle memory spans (free pages that no longer back any live object)

2. Returns idle pages to the OS in the background, a little at a time rather than all at once

3. Adapts to load: retains more during high allocation, releases more during low allocation
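The effect of scavenging can be observed from inside a process with `runtime.ReadMemStats`. The sketch below is a minimal illustration, not our production instrumentation: the `burst` and `afterBurst` helpers and the 64 MiB spike size are hypothetical, and `debug.FreeOSMemory` is called only to force an immediate release so the demo finishes quickly. The background scavenger does the same kind of work gradually and without any explicit call.

```go
package main

import (
	"fmt"
	"runtime"
	"runtime/debug"
)

// heapSnapshot holds the runtime.MemStats fields relevant to scavenging.
type heapSnapshot struct {
	InUse    uint64 // bytes in spans that currently hold at least one object
	Idle     uint64 // bytes in spans that hold no objects
	Released uint64 // bytes of physical memory returned to the OS
}

func snapshot() heapSnapshot {
	var m runtime.MemStats
	runtime.ReadMemStats(&m)
	return heapSnapshot{InUse: m.HeapInuse, Idle: m.HeapIdle, Released: m.HeapReleased}
}

// burst simulates a traffic spike: it allocates n chunks of size bytes,
// touches each one, and lets them all become garbage when it returns.
func burst(n, size int) {
	chunks := make([][]byte, n)
	for i := range chunks {
		chunks[i] = make([]byte, size)
		chunks[i][0] = 1
	}
	runtime.KeepAlive(chunks)
}

// afterBurst runs a spike, then forces a GC and an immediate release.
// FreeOSMemory is a demo shortcut; the background scavenger releases
// memory gradually without it.
func afterBurst(n, size int) heapSnapshot {
	burst(n, size)
	debug.FreeOSMemory()
	return snapshot()
}

func main() {
	before := snapshot()
	after := afterBurst(64, 1<<20) // hypothetical spike of about 64 MiB
	fmt.Printf("before: inuse=%d idle=%d released=%d\n", before.InUse, before.Idle, before.Released)
	fmt.Printf("after:  inuse=%d idle=%d released=%d\n", after.InUse, after.Idle, after.Released)
}
```

`HeapIdle - HeapReleased` estimates memory the runtime still holds but could return, which is the quantity the scavenger works down after a spike.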

Theoretical Validation¶

This confirms our understanding from Scavenging Theory:

- Background scavenger: Runs concurrently with mutators (doesn't block application)

- Idle memory detection: Tracks spans not used in recent GC cycles

- MADV_DONTNEED: On Linux, returns physical pages to the kernel while keeping the virtual mapping, so RSS drops but the address space stays reserved

- Retain policy: Without GOMEMLIMIT, retains 10% buffer to reduce syscalls
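The retain policy can be worked through as arithmetic. The sketch below is a simplification under stated assumptions: `heapGoal` uses the textbook `live * (1 + GOGC/100)` approximation and ignores other inputs the runtime considers, and `retainTarget` applies the 10% buffer mentioned above to that goal. The function names and the 400 MiB live heap are illustrative, not runtime internals.

```go
package main

import "fmt"

// retainExtraPercent is the buffer kept above the heap goal, per the
// 10% figure in the text. Exact runtime rounding is not modeled here.
const retainExtraPercent = 10

// heapGoal approximates the next GC target as live * (1 + gogc/100).
// Assumes gogc >= 0; the real runtime includes additional terms.
func heapGoal(liveBytes uint64, gogc int) uint64 {
	return liveBytes + liveBytes*uint64(gogc)/100
}

// retainTarget is the amount of heap memory the scavenger would keep
// mapped and backed: the heap goal plus the retain buffer.
func retainTarget(goal uint64) uint64 {
	return goal + goal*retainExtraPercent/100
}

func main() {
	live := uint64(400 << 20) // hypothetical 400 MiB live heap
	goal := heapGoal(live, 100)
	fmt.Printf("heap goal: %d MiB, retain target: %d MiB\n", goal>>20, retainTarget(goal)>>20)
	// prints "heap goal: 800 MiB, retain target: 880 MiB"
}
```

Under this model, memory held beyond the retain target is what the scavenger is free to return, which is why retained memory follows the heap goal down when load drops.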

Quantitative Evidence¶

From our campaign:

Time Series Data¶

Key metrics:

- Peak memory: ~70% of container limit during campaign

- Post-campaign: Returned to ~50-60% of container limit

- Release rate: Memory returned to OS within minutes of traffic decrease

- No fragmentation: Memory patterns remained clean over weeks
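To put the peak and post-campaign figures in relation to each other, the short sketch below computes what share of peak usage was given back. It uses only the approximate percentages reported above; `releasedShare` is a hypothetical helper name.

```go
package main

import "fmt"

// releasedShare returns the fraction of peak usage that was returned,
// given peak and post-spike usage as percentages of the container limit.
func releasedShare(peakPct, postPct float64) float64 {
	if peakPct <= 0 {
		return 0
	}
	return (peakPct - postPct) / peakPct
}

func main() {
	// Peak ~70% of limit; post-campaign range ~50-60% of limit.
	for _, post := range []float64{60, 50} {
		fmt.Printf("post=%.0f%%: %.1f%% of peak returned\n", post, 100*releasedShare(70, post))
	}
	// prints:
	// post=60%: 14.3% of peak returned
	// post=50%: 28.6% of peak returned
}
```

In other words, the observed drop corresponds to roughly one seventh to two sevenths of peak memory being returned to the OS.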

Practical Implications¶

For Bursty Workloads¶

Services with any of the following can safely use a higher GOGC, because memory is released when load decreases:

- Diurnal traffic patterns

- Campaign-driven spikes

- Event-based load changes
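GOGC is usually set through the environment (for example `GOGC=200`), but it can also be changed at runtime with `debug.SetGCPercent`. The sketch below shows the programmatic form; the value 200 and the `raiseGOGC` wrapper are illustrative assumptions, not a recommendation from our data.

```go
package main

import (
	"fmt"
	"runtime/debug"
)

// raiseGOGC sets the GC percent and returns the previous setting,
// which makes it easy to log or restore the old value.
func raiseGOGC(percent int) (previous int) {
	return debug.SetGCPercent(percent)
}

func main() {
	prev := raiseGOGC(200) // hypothetical higher target for a bursty service
	fmt.Printf("GOGC changed from %d to 200\n", prev)
}
```

A higher value lets the heap grow further between collections during a spike; the scavenger then returns the extra memory once the heap goal shrinks again.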

For Capacity Planning¶

- Peak allocation determines memory requirement, not average

- No need to provision for "memory never released" scenario

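- GOMEMLIMIT provides a soft ceiling for worst-case allocation: the runtime collects and scavenges more aggressively as usage approaches it, but does not guarantee the limit is never exceeded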
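A memory limit can be supplied through the `GOMEMLIMIT` environment variable or with `debug.SetMemoryLimit` (Go 1.19 and later), which the runtime treats as a soft limit. The sketch below derives a limit from a container size while leaving headroom for memory the Go runtime does not manage. The 4 GiB container, the 10% headroom, and the helper names are hypothetical.

```go
package main

import (
	"fmt"
	"runtime/debug"
)

// limitFor returns a memory limit that leaves headroomPct percent of
// the container for non-Go memory (cgo, thread stacks, kernel buffers).
func limitFor(containerBytes, headroomPct int64) int64 {
	return containerBytes * (100 - headroomPct) / 100
}

// applyLimit sets the limit and reads it back. A negative argument to
// SetMemoryLimit returns the current limit without changing it.
func applyLimit(containerBytes, headroomPct int64) int64 {
	debug.SetMemoryLimit(limitFor(containerBytes, headroomPct))
	return debug.SetMemoryLimit(-1)
}

func main() {
	limit := applyLimit(4<<30, 10) // hypothetical 4 GiB container, 10% headroom
	fmt.Printf("memory limit set to %d MiB\n", limit>>20)
}
```

Sizing the container for peak allocation and setting the limit slightly below it keeps the worst case bounded while normal operation stays governed by GOGC.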

For Cost Optimization¶

- Higher memory utilization is safe (memory returns when unused)

- Can over-provision memory for headroom without paying for unused capacity

- Elastic behavior enables right-sizing based on peak, not average

Edge Cases¶

When Scavenging is Less Effective¶

- Fragmented allocations: Many small objects across many spans

- High steady-state load: Constant allocation leaves few idle spans

- Short running processes: Not enough time for scavenger to work

When Scavenging Works Best¶

- Bursty traffic: Clear high/low allocation periods

- Large object allocations: Easier to return large contiguous spans

- Long-running processes: Scavenger has time to monitor and release

Related Topics¶

- Scavenging Theory - How the scavenger works internally

- Memory Lock Behavior - Steady-state memory behavior

- RSS vs Heap Target - How RSS reflects idle memory